High-Performance SSDs for Generative AI: How to Choose? (Complete Guide)

As artificial intelligence (AI) evolves rapidly, storage has become a critical part of modern AI systems. Whether you’re generating text, designing images, or producing animations, these workloads require fast access to massive amounts of data in real time. That’s why SSDs are no longer “just storage”—they are now a key component that directly impacts system performance, responsiveness, and workflow efficiency.

Table of Contents

- What Is Generative AI?

- The Role of SSDs in Generative AI

- Who Needs a High-Performance SSD?

- Why Traditional Storage Can’t Meet Generative AI Demands

- How to Choose an SSD for Generative AI

- T-CREATE CLASSIC H514 M.2 PCIe 5.0 SSD

- Conclusion

What Is Generative AI?

Generative AI is a type of artificial intelligence built on machine learning and deep learning. By training on large datasets, it learns language patterns, visual features, and content structures—then generates brand-new output such as text, images, music, videos, and even code.

Unlike traditional AI systems, which typically classify inputs or return predictions drawn from existing data, generative AI is valued for its ability to create. It can produce original, practical content based on user intent, which is why it is widely used in content creation, design production, software development, marketing planning, and education and training.

Popular generative AI tools today include:

- ChatGPT (text generation and conversational AI)

- Gemini (multimodal AI)

- Perplexity (AI search and Q&A)

- Sora (text-to-video generation)

- Midjourney (AI image generation)

- Copilot (coding and productivity assistance)

The Role of SSDs in Generative AI

In generative AI workloads, top-tier GPUs matter—but SSDs are also essential. High-capacity, high-performance SSDs don’t just store large training datasets and model files. They also improve workflow efficiency by reducing storage bottlenecks and keeping data moving quickly to memory and GPUs.

Many modern SSDs also use caching (for example, a DRAM cache or a region of NAND running in fast SLC mode). Incoming writes land in the cache first and are then committed to the main flash in batches, which lowers write latency and smooths out bursts of I/O. For AI training and inference, high bandwidth, high IOPS, and low latency are the core requirements of an AI-ready storage system.
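To illustrate why caching matters for sustained writes, here is a minimal Python sketch of a write cache that absorbs data at a fast rate until it fills, after which writes fall back to the slower native speed of the flash. The function name and all numbers are hypothetical, and the model assumes the cache starts empty and is not flushed during the transfer.

```python
def write_time_seconds(total_gb: float, cache_gb: float,
                       cache_speed_mb_s: float, native_speed_mb_s: float) -> float:
    """Estimate how long it takes to write `total_gb` of data.

    Simplified model (an assumption, not any specific drive's behavior):
    the cache starts empty, nothing is flushed during the transfer,
    and 1 GB = 1,000 MB.
    """
    cached_gb = min(total_gb, cache_gb)   # portion absorbed at cache speed
    direct_gb = total_gb - cached_gb      # remainder written at native speed
    return (cached_gb * 1000 / cache_speed_mb_s
            + direct_gb * 1000 / native_speed_mb_s)


# Hypothetical drive: 100 GB cache at 10,000 MB/s, 2,000 MB/s once the cache is full.
for size_gb in (50, 300):
    seconds = write_time_seconds(size_gb, 100, 10_000, 2_000)
    print(f"{size_gb} GB -> {seconds:.0f} s, average {size_gb * 1000 / seconds:,.0f} MB/s")
```

With these assumed numbers, a 50 GB write finishes entirely at cache speed, while a 300 GB write averages only about 2,700 MB/s. That gap is why sustained performance after the cache fills is worth checking, not just the peak figure.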

Key SSD performance factors for generative AI:

- High Bandwidth: Shortens load times for large datasets and AI models

- High IOPS (Input/Output Operations Per Second): Speeds up simultaneous reads/writes across many small files and random access patterns

- Low Latency: Reduces response time and improves real-time inference and interactive AI experiences
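These three metrics are linked. As a rough Python sketch assuming idealized steady-state behavior: throughput is IOPS multiplied by the size of each I/O, and by Little's law the IOPS a drive can sustain is approximately the number of outstanding requests (queue depth) divided by per-request latency. The function names and example figures are illustrative only.

```python
def throughput_mb_s(iops: float, block_size_kib: float) -> float:
    """Throughput (decimal MB/s) = operations per second x bytes per operation."""
    return iops * block_size_kib * 1024 / 1_000_000


def iops_from_latency(queue_depth: int, latency_ms: float) -> float:
    """Little's law: in-flight requests = rate x latency, so rate = depth / latency."""
    return queue_depth / (latency_ms / 1000)


# 100K random 4 KiB reads per second is only about 410 MB/s of throughput.
print(f"{throughput_mb_s(100_000, 4):.1f} MB/s")
# With one request in flight and 0.05 ms latency, the ceiling is about 20,000 IOPS.
print(f"{iops_from_latency(1, 0.05):,.0f} IOPS")
```

This is why small random reads are governed by IOPS and latency rather than by headline sequential bandwidth, and why keeping many requests in flight helps NVMe drives approach their rated IOPS.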

Who Needs a High-Performance SSD?

When your workflow involves heavy data access and model computation, storage performance directly affects productivity and responsiveness. Below are common generative AI scenarios that typically require faster SSD performance:

Large Language Model (LLM) Training

Many generative AI tools, such as ChatGPT, Gemini (formerly Bard), and Microsoft Copilot (formerly Bing Chat), are built on Large Language Models (LLMs). During training, LLM systems repeatedly read massive text corpora and model-related files. If storage can’t keep up, GPUs may sit idle waiting for data, wasting expensive compute resources. A high-performance SSD with high bandwidth, high IOPS, and low latency feeds data to memory and GPUs efficiently, keeping training pipelines smooth and GPU utilization high. In short, storage is a core part of LLM infrastructure.
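One way to reason about whether storage is starving the GPUs is to compare the data rate the training loop could consume with the rate storage can deliver. The Python sketch below assumes data loading is fully overlapped with compute (prefetching) and that nothing is served from the OS page cache. The function name and all figures are hypothetical.

```python
def max_gpu_utilization(samples_per_s: float, bytes_per_sample: float,
                        storage_mb_s: float) -> float:
    """Upper bound on the fraction of time the GPUs can stay busy.

    required rate = samples the GPUs could process per second x bytes per sample.
    If storage delivers less than that, the GPUs wait for the shortfall.
    """
    required_mb_s = samples_per_s * bytes_per_sample / 1_000_000
    return min(1.0, storage_mb_s / required_mb_s)


# Hypothetical pipeline: GPUs could consume 2,000 samples/s of 1 MB each (2,000 MB/s).
for name, mb_s in [("SATA SSD", 550), ("PCIe NVMe SSD", 7_000)]:
    print(f"{name}: up to {max_gpu_utilization(2_000, 1_000_000, mb_s):.1%} GPU utilization")
```

Under these assumptions, the SATA drive caps the GPUs at roughly a quarter of their capacity, while the NVMe drive keeps them fully fed.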

Image and Animation Generation

Image and animation generation often requires frequent loading of large model files and extensive project assets. In workflows involving 4K or higher resolutions, multi-layer projects, or long frame sequences, read/write activity becomes highly random and intensive. In these cases, SSD performance (especially IOPS, bandwidth, and latency) directly affects model load times and generation speed. If storage becomes a bottleneck, GPUs wait for data, processing time increases, and the workflow loses its fluidity. That’s why a high-capacity, high-performance SSD is a foundational upgrade for image and animation generation.
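For workloads that touch many separate assets, a rough lower bound on read time is whichever limit is hit first: the number of I/O operations divided by IOPS, or the total bytes divided by bandwidth. The Python sketch below assumes one I/O per file and ignores file-system overhead and caching. The function name and all figures are hypothetical.

```python
def read_time_seconds(num_files: int, avg_file_kib: float,
                      iops: float, bandwidth_mb_s: float) -> float:
    """Lower-bound estimate: the slower of the IOPS limit and the bandwidth limit wins."""
    iops_bound = num_files / iops                          # time if only IOPS mattered
    total_mb = num_files * avg_file_kib * 1024 / 1_000_000
    bandwidth_bound = total_mb / bandwidth_mb_s            # time if only bandwidth mattered
    return max(iops_bound, bandwidth_bound)


# Hypothetical project: 200,000 assets averaging 64 KiB (about 13.1 GB in total).
hdd = read_time_seconds(200_000, 64, iops=120, bandwidth_mb_s=150)
nvme = read_time_seconds(200_000, 64, iops=500_000, bandwidth_mb_s=7_000)
print(f"HDD: ~{hdd:,.0f} s   NVMe SSD: ~{nvme:.1f} s")
```

With these assumed figures, the HDD is limited by IOPS (close to half an hour), while the NVMe drive is limited by bandwidth (about two seconds).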

Why Traditional Storage Can’t Meet Generative AI Demands

As generative AI models scale from a few billion parameters (e.g., 7B) to tens or even hundreds of billions, storage workloads grow dramatically. Model files get larger, and the demands on speed, IOPS, and latency rise with them.
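A quick way to see how parameter count translates into storage is to multiply the number of parameters by the bytes each one occupies at a given numeric precision. The sketch below covers model weights only. Tokenizer files, metadata, and training checkpoints that also hold optimizer state (often several times larger) are not included. The precision table and function name are illustrative.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}


def weights_size_gb(num_params: float, precision: str = "fp16") -> float:
    """Approximate size of model weights in decimal GB (1 GB = 10**9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


for params in (7e9, 70e9, 175e9):
    sizes = ", ".join(f"{p}: {weights_size_gb(params, p):,.1f} GB" for p in BYTES_PER_PARAM)
    print(f"{params / 1e9:.0f}B parameters -> {sizes}")
```

At FP16, for example, a 7B model needs about 14 GB for its weights, while a 175B model needs about 350 GB.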

- HDDs (hard disk drives) rely on spinning platters, which results in high latency and weak random access—making them unsuitable for the fast, continuous data flow GPUs require for AI training and inference.

- SATA SSDs are faster than HDDs but are capped by the SATA III interface (6 Gb/s, roughly 550-600 MB/s in practice) and by the AHCI protocol’s single command queue. Under multitasking or intensive inference workloads, SATA can become a bottleneck.
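The HDD figures follow from the mechanics. On average, the platter must turn half a revolution before the requested sector arrives, on top of the time the head spends seeking. This Python sketch estimates random-read IOPS from those two delays. It ignores transfer time and command queuing, and the seek time used is an assumed figure rather than a spec for any particular drive.

```python
def avg_rotational_latency_ms(rpm: float) -> float:
    """Average wait for the sector to rotate under the head: half a revolution."""
    return 0.5 * 60_000 / rpm   # 60,000 ms per minute


def hdd_random_iops(rpm: float, avg_seek_ms: float) -> float:
    """Each random I/O pays one seek plus the average rotational latency."""
    return 1000 / (avg_seek_ms + avg_rotational_latency_ms(rpm))


# Assumed 7,200 RPM drive with a 4 ms average seek: about 8.2 ms per random read.
print(f"{avg_rotational_latency_ms(7_200):.2f} ms rotational latency")
print(f"~{hdd_random_iops(7_200, 4.0):.0f} random IOPS")
```

Even under these favorable assumptions, the result lands in the low hundreds of IOPS, two to three orders of magnitude below an SSD.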

To fully unlock GPU performance and support large model loading, dataset streaming, and real-time inference, generative AI systems increasingly rely on NVMe SSDs, which provide high bandwidth, high IOPS, and low latency.
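To get a rough sense of what a drive delivers on your own system, you can time a sequential read of a large file. The Python sketch below is a simple, hypothetical helper, not a substitute for a dedicated benchmark such as fio. Results can be inflated by the operating system's page cache, because a recently written file is often served from RAM. For meaningful numbers, test a file much larger than system memory or one that has not been read recently.

```python
import os
import tempfile
import time


def sequential_read_mb_s(path: str, chunk_size: int = 8 * 1024 * 1024) -> float:
    """Read `path` front to back in large chunks and return throughput in MB/s."""
    total_bytes = 0
    start = time.perf_counter()
    with open(path, "rb", buffering=0) as f:   # unbuffered at the Python level
        while chunk := f.read(chunk_size):
            total_bytes += len(chunk)
    elapsed = time.perf_counter() - start
    return total_bytes / 1_000_000 / elapsed


if __name__ == "__main__":
    # Demo on a small temporary file. It will likely be read from the page cache,
    # so this only shows usage and does not measure the drive itself.
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.write(os.urandom(64 * 1024 * 1024))
        path = tmp.name
    try:
        print(f"{sequential_read_mb_s(path):,.0f} MB/s")
    finally:
        os.remove(path)
```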

Storage Performance Comparison (Typical)

| Storage Type | Latency (Approx.) | Random IOPS (Approx.) | Sequential Bandwidth (Approx.) |
|---|---|---|---|
| HDD | ~5-10 ms | ~80-160 | ~100-200 MB/s |
| SATA SSD | ~0.1-0.5 ms | ~50K-100K | ~500-550 MB/s |
| PCIe NVMe SSD | ~0.01-0.05 ms | ~100K-1M | ~3,000-14,000 MB/s |
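Using representative bandwidth values from the table, the best-case time to read a model file is simply its size divided by sequential bandwidth. This Python sketch assumes a hypothetical 14 GB file (roughly a 7B-parameter model at FP16). It ignores file-system, decompression, and deserialization overhead, so real load times will be longer.

```python
MODEL_SIZE_MB = 14_000  # hypothetical 14 GB model file (decimal units)

# Representative sequential bandwidths picked from the ranges in the comparison table.
BANDWIDTH_MB_S = {
    "HDD": 150,
    "SATA SSD": 550,
    "PCIe NVMe SSD (Gen5-class)": 14_000,
}


def load_time_seconds(size_mb: float, bandwidth_mb_s: float) -> float:
    """Best-case read time: bytes to move divided by sustained sequential bandwidth."""
    return size_mb / bandwidth_mb_s


for name, bandwidth in BANDWIDTH_MB_S.items():
    print(f"{name:<28} {load_time_seconds(MODEL_SIZE_MB, bandwidth):6.1f} s")
```

That works out to roughly 93 seconds, 25 seconds, and 1 second, respectively.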

How to Choose an SSD for Generative AI

When selecting an SSD for generative AI workloads (AI PC, AI workstation, creator workflows, or development rigs), focus on these key factors:

- High Random Read/Write Performance (IOPS)

- Low Latency + High Stability (consistent sustained performance)

- High Bandwidth (fast sequential speeds for large models and datasets)

- Large Capacity (space for datasets, checkpoints, and multiple models)

- PCIe Interface (NVMe recommended; PCIe Gen4/Gen5 for high-end AI workflows)
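PCIe generation matters because the link itself caps sequential speed. This Python sketch computes the theoretical one-direction bandwidth of an x4 link (the usual width for M.2 NVMe SSDs) from the per-lane transfer rate and the 128b/130b encoding used since PCIe 3.0. Real drives land somewhat lower because of protocol overhead. The function name is illustrative.

```python
# Per-lane raw transfer rates in gigatransfers per second.
GT_PER_S = {3: 8.0, 4: 16.0, 5: 32.0}
ENCODING_EFFICIENCY = 128 / 130   # 128b/130b line encoding (PCIe 3.0 through 5.0)


def pcie_bandwidth_mb_s(gen: int, lanes: int = 4) -> float:
    """Theoretical one-direction link bandwidth in MB/s, before protocol overhead."""
    bits_per_s = GT_PER_S[gen] * 1e9 * lanes * ENCODING_EFFICIENCY
    return bits_per_s / 8 / 1e6


for gen in GT_PER_S:
    print(f"PCIe {gen}.0 x4: ~{pcie_bandwidth_mb_s(gen):,.0f} MB/s")
```

That is roughly 3.9, 7.9, and 15.8 GB/s, compared with about 600 MB/s for SATA III. This is why Gen5 drives can exceed 14,000 MB/s while the fastest Gen4 drives top out around 7,000-7,500 MB/s.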

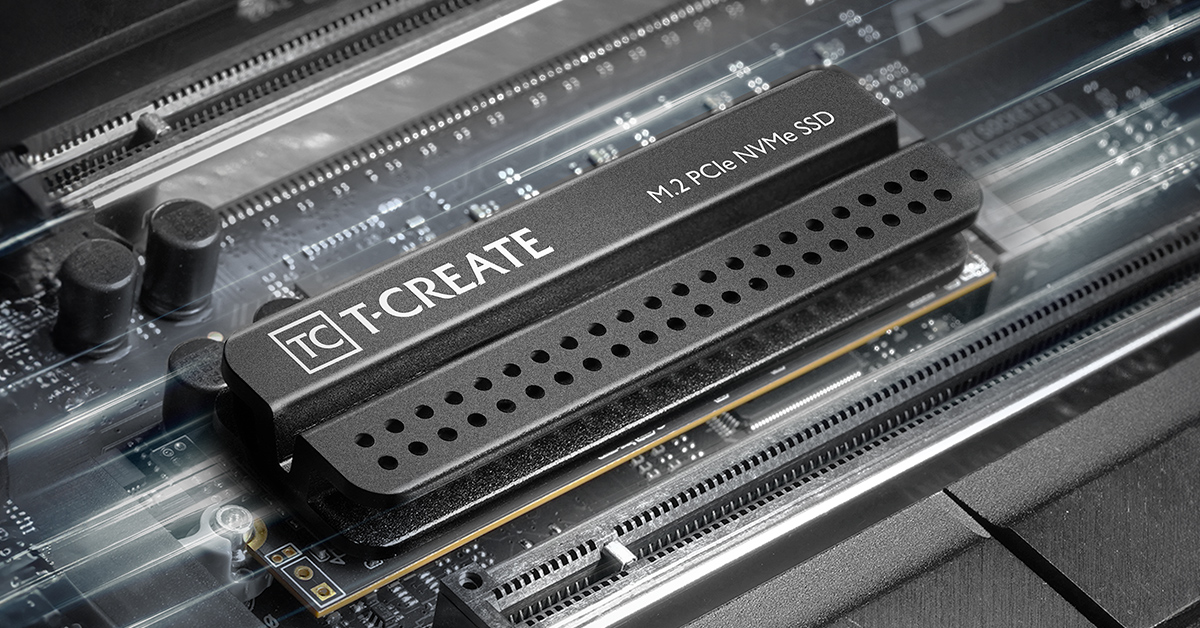

T-CREATE CLASSIC H514 M.2 PCIe 5.0 SSD

The first Gen5 SSD in the T-CREATE creator lineup, delivering sequential read/write speeds of up to 14,200/13,500 MB/s.

- Up to 4TB of capacity, enough to store large language models from 7B to 175B parameters as well as large projects for video editing, photo work, VFX, and 3D modeling.

- An advanced 6nm controller designed to reduce read/write latency and improve overall system efficiency.
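To sanity-check whether a drive of a given size fits a workload, you can total up the model weights and other files and compare the sum with usable space. The Python sketch below does this for a hypothetical mix of 7B, 70B, and 175B models at FP16 plus assumed project and checkpoint sizes. It also keeps 10% of the drive free, an assumed margin, since SSDs generally sustain performance better with free space. All names and figures are illustrative.

```python
def weights_size_gb(num_params: float, bytes_per_param: float = 2) -> float:
    """Model weights in decimal GB; 2 bytes per parameter corresponds to FP16/BF16."""
    return num_params * bytes_per_param / 1e9


def capacity_check(drive_tb: float, items_gb: dict, reserve_fraction: float = 0.10):
    """Return (fits, remaining_gb) after reserving part of the drive as free space."""
    usable_gb = drive_tb * 1000 * (1 - reserve_fraction)   # marketed TB are decimal
    used_gb = sum(items_gb.values())
    return used_gb <= usable_gb, usable_gb - used_gb


workload = {
    "7B model (FP16)": weights_size_gb(7e9),
    "70B model (FP16)": weights_size_gb(70e9),
    "175B model (FP16)": weights_size_gb(175e9),
    "project assets (assumed)": 1_000,
    "checkpoints (assumed)": 500,
}
fits, remaining = capacity_check(4, workload)
print(f"fits on 4 TB: {fits}, ~{remaining:,.0f} GB to spare")
```

Keep in mind that a 4 TB drive shows up as about 3.64 TiB in operating systems that report binary units.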

Conclusion

In the fast-growing AI era, storage has become more important than ever. Training large models, generating images and video, and running real-time search or analytics all require constant high-speed reads and writes. If storage can’t keep up, even the most powerful (and expensive) compute resources may be forced to wait—reducing overall productivity.

As a result, SSD technology continues to evolve toward higher capacity, faster performance, and better efficiency, supporting everything from single-machine workflows to large-scale data centers. With data volumes rising, SSDs are no longer optional accessories—they are a critical part of AI infrastructure. Choosing the right SSD improves data throughput, boosts system responsiveness, and builds a stable, scalable foundation for generative AI applications.
